Big Data

|

Submitting Applications:

spark-submit

- References

spark-submitcommand line options- Spark Java simple application: "Line Count"

- Running the application

-

References

See this page for more details about submitting applications using

spark-submit:

https://spark.apache.org/docs/latest/submitting-applications.html -

spark-submitcommand line options$ ${SPARK_HOME}/bin/spark-submit --help Usage: spark-submit [options] <app jar | python file | R file> [app arguments] Usage: spark-submit --kill [submission ID] --master [spark://...] Usage: spark-submit --status [submission ID] --master [spark://...] Usage: spark-submit run-example [options] example-class [example args]-

Options:

--name NAME A name of your application. --master MASTER_URL local, spark://host:port, mesos://host:port, yarn, or k8s://https://host:port. (Default: local[*]) --deploy-mode DEPLOY_MODE Whether to launch the driver program locally ("client") or on one of the worker machines inside the cluster ("cluster"). (Default: client) --conf PROP=VALUE Arbitrary Spark configuration property. --properties-file FILE Path to a file from which to load extra properties. If not specified, this will look for conf/spark-defaults.conf. --class CLASS_NAME Your application’s main class (for Java / Scala apps). --jars JARS Comma-separated list of jars to include on the driver and executor classpaths. --packages Comma-separated list of maven coordinates of jars to include on the driver and executor classpaths. Will search the local maven repo, then maven central and any additional remote repositories given by --repositories. The format for the coordinates should be groupId:artifactId:version. --exclude-packages Comma-separated list of groupId:artifactId, to exclude while resolving the dependencies provided in --packages to avoid dependency conflicts. --repositories Comma-separated list of additional remote repositories to search for the maven coordinates given with --packages. --py-files PY_FILES Comma-separated list of .zip, .egg, or .py files to place on the PYTHONPATH for Python apps. --files FILES Comma-separated list of files to be placed in the working directory of each executor. File paths of these files in executors can be accessed via SparkFiles.get(fileName). --driver-memory MEM Memory for driver (e.g. 1000M, 2G) (Default: 1024M). --driver-java-options Extra Java options to pass to the driver. --driver-library-path Extra library path entries to pass to the driver. --driver-class-path Extra class path entries to pass to the driver. Note that jars added with --jars are automatically included in the classpath. --executor-memory MEM Memory per executor (e.g. 1000M, 2G). (Default: 1G) --proxy-user NAME User to impersonate when submitting the application. This argument does not work with --principal / --keytab. --help, -h Show this help message and exit. --verbose, -v Print additional debug output. --version, Print the version of current Spark. -

Cluster deploy mode only:

--driver-cores NUM Number of cores used by the driver, only in cluster mode. (Default: 1) -

Spark standalone or Mesos with cluster deploy mode only:

--supervise If given, restarts the driver on failure. --kill SUBMISSION_ID If given, kills the driver specified. --status SUBMISSION_ID If given, requests the status of the driver specified.

-

Spark standalone and Mesos only:

--total-executor-cores NUM Total cores for all executors.

-

Spark standalone and YARN only:

--executor-cores NUM Number of cores per executor. (Default: 1 in YARN mode, or all available cores on the worker in standalone mode) -

YARN only:

--queue QUEUE_NAME The YARN queue to submit to. (Default: "default") --num-executors NUM Number of executors to launch. If dynamic allocation is enabled, the initial number of executors will be at least NUM. (Default: 2) --archives ARCHIVES Comma separated list of archives to be extracted into the working directory of each executor. --principal PRINCIPAL Principal to be used to login to KDC, while running on secure HDFS. --keytab KEYTAB The full path to the file that contains the keytab for the principal specified above. This keytab will be copied to the node running the Application Master via the Secure Distributed Cache, for renewing the login tickets and the delegation tokens periodically.

-

Options:

-

Spark Java simple application: "Line Count"

-

pom.xml file

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <groupId>com.mtitek.spark.app</groupId> <version>0.0.1-SNAPSHOT</version> <artifactId>line-count</artifactId> <properties> <maven.compiler.source>1.8</maven.compiler.source> <maven.compiler.target>1.8</maven.compiler.target> </properties> <dependencies> <dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-core_2.12</artifactId> <version>2.4.3</version> </dependency> </dependencies> </project> -

Java code

package spark.app; import java.io.IOException; import org.apache.spark.SparkConf; import org.apache.spark.api.java.JavaRDD; import org.apache.spark.api.java.JavaSparkContext; public class SparkAppLineCount { public static void main(String[] args) throws IOException { SparkConf sparkConf = new SparkConf().setAppName("Spark App Line Count"); try (JavaSparkContext javaSparkContext = new JavaSparkContext(sparkConf)) { JavaRDD<String> javaRDD = javaSparkContext.textFile(args[0]); System.out.println("Number of lines: " + javaRDD.count()); } } }

-

pom.xml file

-

Running the application

If you build the application "Line Count" (

mvn package) it will produce the jarline-count-0.0.1-SNAPSHOT.jar

Before running the application, let's create a simple text file:

$ echo "1111" > test1.txt $ hdfs dfs -put test1.txt hdfs://localhost:8020/

-

Running the application using local mode:

spark-submit \ --class spark.app.SparkAppLineCount \ --master local \ line-count-0.0.1-SNAPSHOT.jar hdfs://localhost:8020/test1.txt

-

Running the application using cluster mode (Deploy Mode: client):

spark-submit \ --class spark.app.SparkAppLineCount \ --master "spark://localhost:7077" \ line-count-0.0.1-SNAPSHOT.jar hdfs://localhost:8020/test1.txt

-

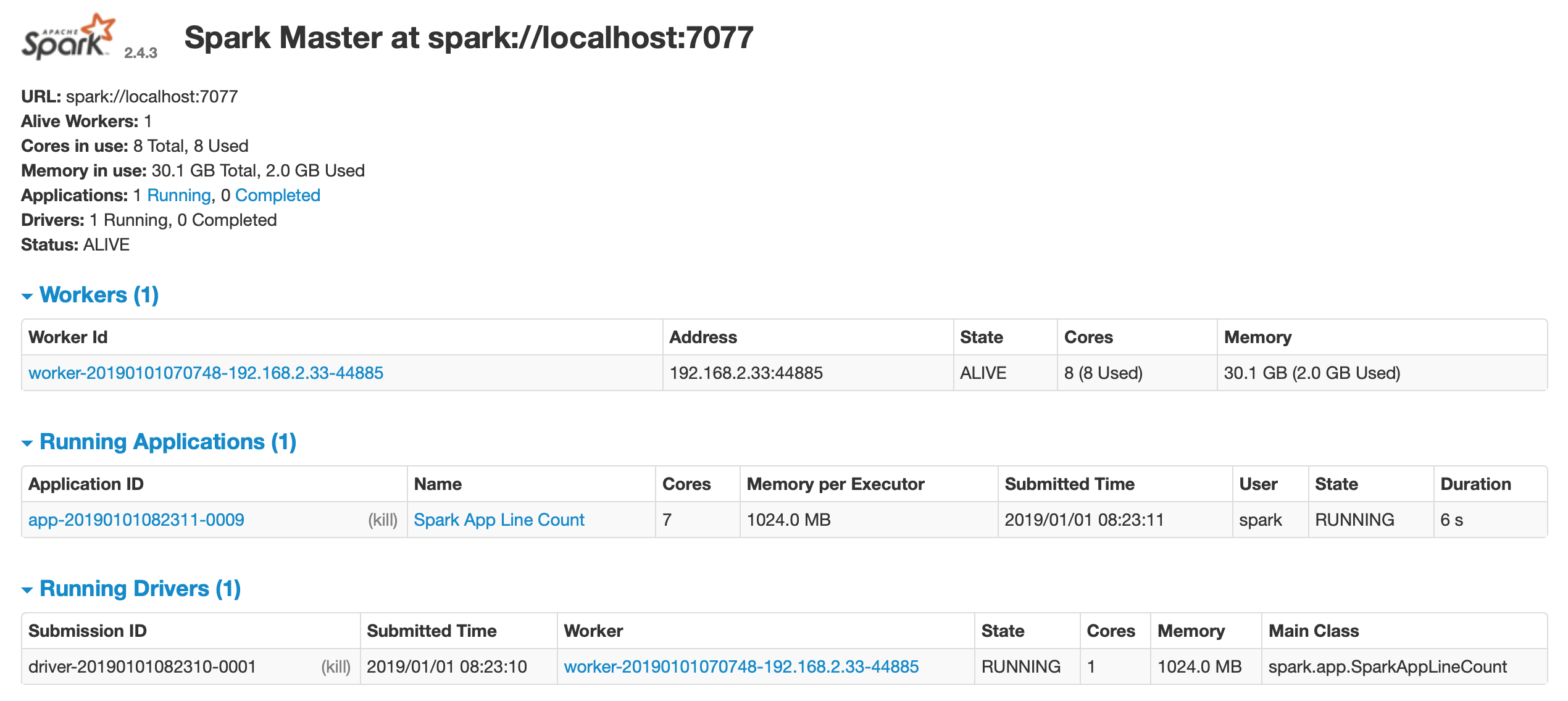

Running the application using cluster mode (Deploy Mode: cluster):

spark-submit \ --class spark.app.SparkAppLineCount \ --master "spark://localhost:7077" \ --deploy-mode cluster \ line-count-0.0.1-SNAPSHOT.jar hdfs://localhost:8020/test1.txt

Using "cluster" mode, Spark will launch the driver inside the cluster.

-

Running the application using local mode:

© 2025

mtitek